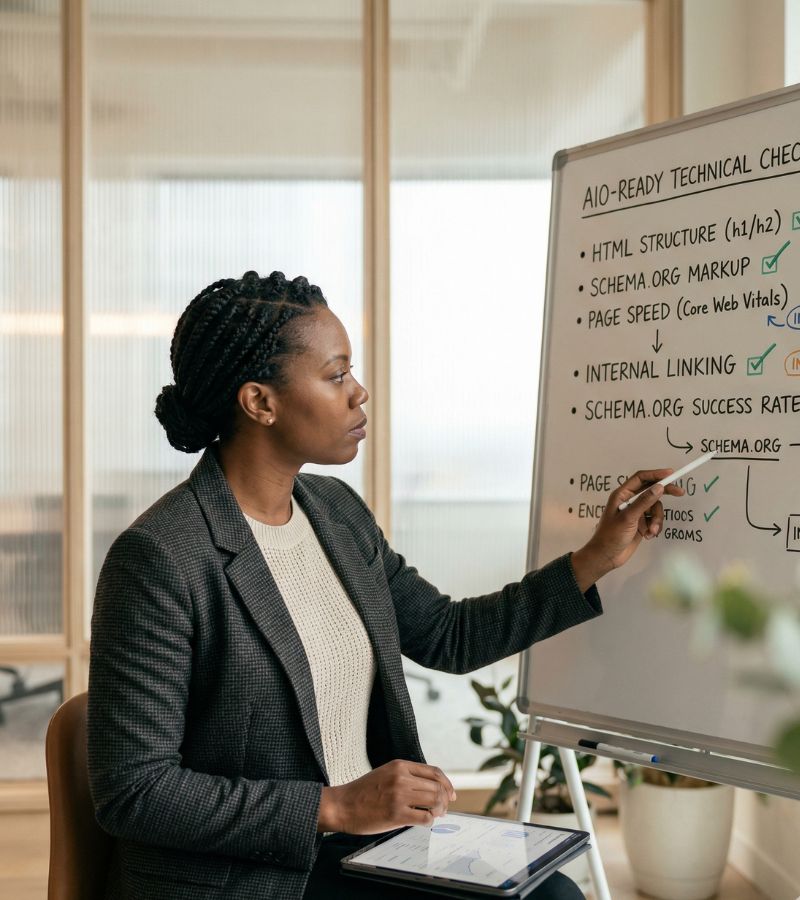

The Quick Rundown

- 78% of businesses report zero visibility in AI-generated answers despite having functional websites and reasonable organic rankings, and the problem is almost always technical, not content-related.

- GPTBot, ClaudeBot, and PerplexityBot do not execute JavaScript, meaning any content rendered client-side is invisible to them even if Googlebot indexes it perfectly.

- Pages with LCP under 2.5 seconds are 1.47x more likely to appear in AI-generated outputs; pages with CLS under 0.1 have 29.8% higher AI inclusion rates.

- Schema markup improves AI extraction accuracy by approximately 30%, and FAQPage and HowTo schema are the highest-priority types for most sites.

- The five audit layers to check in order are: crawler access (robots.txt), rendering architecture (JavaScript dependency), schema markup (Rich Results Test), content structure (BLUF formatting, FAQ blocks), and trust signals (author bios, dates, citations).

- Blocking AI crawlers in robots.txt is a business decision with real consequences. 21% of the top 1,000 websites block GPTBot, but doing so eliminates any chance of appearing in ChatGPT responses.

- AI Overviews citations are strongly correlated with top-3 organic rankings, but not guaranteed by them. Technical AIO readiness is the differentiating factor between pages that rank and pages that get cited.

- This checklist can be completed in 15 minutes for most sites; the fixes identified typically take 1-3 days to implement and show measurable citation improvement within 4-8 weeks.

Most websites that fail to appear in Google AI Overviews are not failing because of poor content quality. They are failing because of fixable technical gaps that prevent AI systems from accessing, parsing, and trusting the content in the first place. According to Ziptie’s March 2026 research, 78% of businesses report zero visibility in AI-generated answers despite having functional websites and reasonable organic rankings. The gap between traditional SEO readiness and AI search readiness is real, measurable, and diagnosable in under 15 minutes for most sites.

This checklist is organized into five timed segments. Each segment targets a distinct layer of AIO readiness: crawler access, rendering architecture, schema markup, content structure, and trust signals. Work through each segment in order, since failures at earlier layers block the value of later optimizations entirely.

Minutes 1-3: Crawler Access Audit

The first question AI systems ask about your site is not “is this content good?” It is “can I access this content at all?” Google’s Googlebot powers AI Overviews retrieval, while third-party AI platforms use their own crawlers: GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot (Perplexity AI), and Bingbot (Microsoft Copilot).

Check 1: Open your robots.txt file

Navigate to yourdomain.com/robots.txt. Look for any of the following patterns that would block AI access:

- Disallow: / under User-agent: * (blocks all bots)

- Explicit blocks on User-agent: GPTBot, User-agent: ClaudeBot, or User-agent: PerplexityBot

- CDN or WAF rules (Cloudflare, Akamai) that may be blocking AI crawlers at the network level
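The robots.txt portion of Check 1 can be scripted. The following is a minimal sketch using Python's standard `urllib.robotparser`; the `SAMPLE` file and the `example.com` URL are hypothetical, and the crawler list simply mirrors the user agents named in this article. It does not detect CDN or WAF blocks, which happen at the network level and never appear in robots.txt.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this article; adjust to the crawlers you care about.
AI_CRAWLERS = ["Googlebot", "GPTBot", "ClaudeBot", "PerplexityBot", "Bingbot"]


def blocked_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the crawlers that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks only GPTBot.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(SAMPLE))
```

To audit a live site, pass the downloaded contents of yourdomain.com/robots.txt in place of `SAMPLE` and test the URLs of your key pages rather than only the homepage.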

Check 2: Verify your sitemap.xml exists

Navigate to yourdomain.com/sitemap.xml. If you receive a 404 error, your site is not providing AI crawlers with a structured content map. Most CMS platforms (WordPress with an SEO plugin, Shopify, Squarespace) can generate this automatically.
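Beyond confirming that the file exists, it can help to confirm that it parses and lists the pages you expect. This sketch assumes a sitemap that follows the sitemaps.org 0.9 namespace; the sample XML and its URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> list[str]:
    """Return every <loc> value in a sitemap or sitemap index document."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]


# Hypothetical two-page sitemap.
SAMPLE_SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
</urlset>
"""

for url in sitemap_urls(SAMPLE_SITEMAP):
    print(url)
```

An empty list or a parse error on your real sitemap is worth treating as a failed check, even if the URL itself returns a 200 response.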

Check 3: Confirm your site is indexed

Run a site:yourdomain.com search in Google. If your key pages do not appear, they cannot be cited in AI Overviews regardless of content quality. Check for accidental noindex meta tags on important pages using your browser’s “View Page Source” function and searching for noindex.
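The manual search for noindex in the page source can be sketched as a small parser. This example uses only the standard library and inspects `robots` and `googlebot` meta tags; it is an illustration, not a complete indexability check, since it does not see an `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """True if any robots meta tag in the page source contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives


print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
```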

Time check: This segment takes approximately 2-3 minutes. If you find blocking rules in robots.txt, note them for immediate remediation but continue the audit.

Minutes 4-6: Rendering Architecture Check

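Most third-party AI crawlers do not execute JavaScript. GPTBot, ClaudeBot, and PerplexityBot read only the HTML your server returns, even when Googlebot renders and indexes the same page without trouble. This is the single most underestimated technical barrier to AI visibility. Sites built on React, Vue, Angular, or other client-side rendering frameworks present a near-empty HTML shell to these crawlers. The content users see in their browsers loads after the crawler has already moved on.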

Check 4: Disable JavaScript and reload your key pages

In Chrome, open DevTools (F12), go to Settings, and disable JavaScript. Reload your most important pages. What you see is approximately what AI crawlers see. If your main content, headings, and body text disappear, you have a rendering problem that must be resolved before any other optimization will take effect.
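The same test can be approximated without a browser by counting the words present in the raw HTML, ignoring anything inside script and style tags. This is a rough sketch under the assumption that a near-zero word count signals a client-side rendered shell; both sample pages are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects words that exist in the raw HTML outside script/style/template."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.words: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.extend(data.split())


def raw_word_count(html: str) -> int:
    """Number of words a crawler that does not run JavaScript could read."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return len(parser.words)


# Hypothetical client-side rendered shell versus a server-rendered page.
CSR_SHELL = '<html><body><div id="root"></div><script>window.__DATA__ = {"a": 1};</script></body></html>'
SSR_PAGE = "<html><body><h1>Pricing plans</h1><p>Three tiers are available.</p></body></html>"

print(raw_word_count(CSR_SHELL), raw_word_count(SSR_PAGE))
```

Feed the function the response body from a plain HTTP request (not the DOM copied from DevTools) so the count reflects what a non-rendering crawler receives.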

Check 5: Review your rendering approach

| Approach | How It Works | Best For |
| --- | --- | --- |
| Server-Side Rendering (SSR) | Full HTML generated on server before delivery | New builds or major refactors |
| Pre-rendering | Static HTML generated at build time for bot user agents | Content-heavy sites with infrequent updates |
| Hybrid / ISR | Combines SSR for initial load with client-side for interactivity | Complex apps needing both UX and crawlability |
| Dynamic Rendering | Serves static HTML to bots, dynamic to users | Quick fix for existing CSR sites |

SSR is the recommended long-term approach. If a full migration is not practical, pre-rendering for AI crawler user agents provides a viable intermediate step.

Check 6: Test raw HTML page weight

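Use “View Page Source” (not Inspect Element, which shows the rendered DOM) to see the raw HTML. View Page Source does not report a size, so check the document request in the DevTools Network tab, or save the source to a file and check the file size. According to SALT.agency data cited by Ziptie, AI crawlers abandon approximately 18% of pages exceeding 1MB in raw HTML weight. Keep your HTML under 1MB.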
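If you already have the raw response body, the weight check is a one-line measurement. This sketch assumes 1MB means 1,000,000 bytes, which the source does not specify; the function and constant names are illustrative.

```python
# Assumption: the 1MB ceiling is interpreted as 1,000,000 bytes.
MAX_HTML_BYTES = 1_000_000


def html_weight(raw_html: bytes) -> dict:
    """Report the size of the raw HTML and whether it stays under the ceiling."""
    size = len(raw_html)
    return {
        "bytes": size,
        "megabytes": round(size / 1_000_000, 2),
        "within_limit": size < MAX_HTML_BYTES,
    }


print(html_weight(b"<html>" + b"x" * 1_500_000 + b"</html>"))
```

Measure the uncompressed body, since that is what a crawler has to parse once the response is decoded.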

Time check: This segment takes approximately 2-3 minutes. JavaScript rendering issues require developer involvement to fix, but identifying them now is the critical first step.

Minutes 7-9: Schema Markup Validation

Schema markup improves AI search visibility by approximately 30% by helping LLM crawlers extract and parse structured content through RAG (Retrieval-Augmented Generation) systems. JSON-LD structured data explicitly defines entities and content relationships, making pages more likely to be retrieved and cited. A BrightEdge study found that sites with structured data plus FAQ blocks saw a 44% increase in AI search citations, and FAQPage schema had a 67% citation rate in AI responses.

Check 7: Run Google’s Rich Results Test

Navigate to search.google.com/test/rich-results and enter your homepage URL. A passing result shows multiple detected items, especially a specific business type like Organization, LocalBusiness, or ProfessionalService with green checkmarks. A failing result shows “No items detected” or only a generic WebSite item.

Check 8: Verify the priority schema types are implemented

| Schema Type | AI Search Purpose | Priority |
| --- | --- | --- |
| Organization | Establishes entity identity; feeds knowledge graph disambiguation | Critical |
| Article | Identifies content type, author, publication date, topic | Critical |
| FAQPage | Surfaces Q&A pairs that map directly to conversational queries | High |
| HowTo | Structures procedural content into extractable steps | High |
| BreadcrumbList | Helps AI engines understand content hierarchy and topical structure | Medium |
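Check 8 can be partly automated by reading the JSON-LD blocks out of a page and comparing the declared types against the priority list. The following is a sketch using only the standard library; the sample markup is hypothetical, and the matching is exact, so a subtype such as NewsArticle or LocalBusiness would be reported as missing its parent type.

```python
import json
from html.parser import HTMLParser

PRIORITY_TYPES = ["Organization", "Article", "FAQPage", "HowTo", "BreadcrumbList"]


class JsonLdParser(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.blocks: list = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is skipped here; a validator will flag it


def collect_types(node, found: set) -> None:
    """Walk nested JSON-LD (including @graph arrays) and record every @type."""
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            found.add(declared)
        elif isinstance(declared, list):
            found.update(t for t in declared if isinstance(t, str))
        for value in node.values():
            collect_types(value, found)
    elif isinstance(node, list):
        for item in node:
            collect_types(item, found)


def missing_priority_types(html: str) -> list[str]:
    parser = JsonLdParser()
    parser.feed(html)
    parser.close()
    found: set = set()
    collect_types(parser.blocks, found)
    return [t for t in PRIORITY_TYPES if t not in found]


# Hypothetical page that declares only Organization and Article.
SAMPLE_HTML = """
<script type="application/ld+json">
{"@context": "https://schema.org", "@graph": [
  {"@type": "Organization", "name": "Example Co"},
  {"@type": "Article", "headline": "Example headline"}
]}
</script>
"""

print(missing_priority_types(SAMPLE_HTML))
```

Not every page needs every type: HowTo only applies to procedural content, so treat the output as a prompt for review rather than a strict failure list.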

Check 9: Confirm JSON-LD format and entity consistency

Schema markup should use JSON-LD, the format Google recommends, rather than Microdata or RDFa. Verify that your author and organization entities are consistently defined across your site, and that the @id property uses a stable URI (typically your homepage URL or a dedicated entity page URL). Use the sameAs property to link to your Wikipedia page, Wikidata entry, LinkedIn profile, and other authoritative external references.
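As an illustration of a consistent entity definition, the sketch below builds an Organization block with a stable `@id` and a `sameAs` list. The company name, all URLs, and the `#organization` fragment are hypothetical; the fragment is one common convention, not a requirement stated by the source.

```python
import json


def organization_jsonld(name: str, homepage: str, same_as: list[str]) -> dict:
    """Build an Organization entity whose @id stays the same on every page."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        # Assumption: homepage URL plus a fragment serves as the stable identifier.
        "@id": homepage.rstrip("/") + "/#organization",
        "name": name,
        "url": homepage,
        "sameAs": same_as,
    }


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag that belongs in the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


ORG = organization_jsonld(
    "Example Co",
    "https://example.com/",
    ["https://www.linkedin.com/company/example-co", "https://www.wikidata.org/wiki/Q0"],
)
print(as_script_tag(ORG))
```

Other entities, such as an Article's publisher, can then point to the same organization with `{"@id": "https://example.com/#organization"}` instead of redefining it on each page.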

Time check: This segment takes approximately 2-3 minutes. Schema validation is one of the highest-ROI fixes available for AIO readiness.

Minutes 10-12: Content Structure Assessment

AI systems pull individual passages, not entire pages. The Retrieval-Augmented Generation architecture that powers AI Overviews retrieves the most relevant text chunks from your pages and synthesizes them into answers. Pages that are structured for human narrative flow often fail AI extraction because the relevant answer is buried paragraphs deep.

Check 10: Apply the BLUF test to your key pages

BLUF stands for Bottom Line Up Front. For each important page, ask: does the primary answer to the page’s core question appear in the first 100 words? LLMClicks’ study of 30 queries found that 90% of winning citations answered the query within the first 100 words of the page. Scroll to the top of your page and read the opening paragraph. If it is introductory context rather than a direct answer, the page needs restructuring.
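For triage across many pages, a crude proxy for the BLUF test is to check whether the key terms of the target question appear in the first 100 words. This is a heuristic sketch only: term overlap is an assumption standing in for "answers the query", so it cannot replace reading the opening paragraph. The sample texts and terms are hypothetical.

```python
def opening_words(text: str, limit: int = 100) -> list[str]:
    """Return the first `limit` whitespace-separated words of the page text."""
    return text.split()[:limit]


def bluf_term_coverage(text: str, key_terms: list[str], limit: int = 100) -> float:
    """Share of key terms that appear within the first `limit` words (0.0 to 1.0)."""
    if not key_terms:
        return 0.0
    opening = " ".join(opening_words(text, limit)).lower()
    hits = [term for term in key_terms if term.lower() in opening]
    return len(hits) / len(key_terms)


# Hypothetical pages: one answers immediately, one buries the answer.
DIRECT = "GPTBot does not execute JavaScript, so client-rendered content is invisible to it."
BURIED = "context " * 120 + "GPTBot does not execute JavaScript."
TERMS = ["GPTBot", "JavaScript"]

print(bluf_term_coverage(DIRECT, TERMS), bluf_term_coverage(BURIED, TERMS))
```

Pages with low coverage are candidates for manual review first.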

Check 11: Check heading extractability

Each H2 heading should answer one intent only and be specific enough to stand alone as a meaningful label. Vague headings like “Overview” or “Key Points” reduce AI reuse potential even when the underlying content is strong. Stridec’s 2026 AI SEO audit framework identifies heading extractability as a distinct audit checkpoint because AI systems use headings as retrieval anchors.
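A quick way to surface weak headings is to extract every H2 and compare it against a list of generic labels. In this sketch, "Overview" and "Key Points" come from the examples above; the other entries in `VAGUE_LABELS` are assumptions you should adapt to your own content.

```python
from html.parser import HTMLParser

# "overview" and "key points" are from the article; the rest are illustrative additions.
VAGUE_LABELS = {"overview", "key points", "introduction", "summary", "details"}


class H2Collector(HTMLParser):
    """Collects the text of every <h2> element."""

    def __init__(self):
        super().__init__()
        self._in_h2 = False
        self._buffer: list[str] = []
        self.headings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_h2:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_h2:
            self._in_h2 = False
            # Collapse internal whitespace so headings compare cleanly.
            self.headings.append(" ".join("".join(self._buffer).split()))


def vague_h2s(html: str) -> list[str]:
    """Return H2 headings that match a known generic label."""
    collector = H2Collector()
    collector.feed(html)
    collector.close()
    return [h for h in collector.headings if h.lower().rstrip(":") in VAGUE_LABELS]


SAMPLE_PAGE = "<h2>Overview</h2><p>Text</p><h2>How GPTBot reads robots.txt</h2><h2>Key Points</h2>"
print(vague_h2s(SAMPLE_PAGE))
```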

Check 12: Verify FAQ blocks exist on informational pages

Pages targeting informational queries should include a dedicated FAQ section with question-format H3 headings followed by direct, concise answers. This structure maps directly to conversational AI queries and is one of the most reliable citation triggers available. The FAQ section should also be marked up with FAQPage schema (per Check 8).
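Once the FAQ section exists on the page, the matching FAQPage markup can be generated from the same question and answer pairs. The sketch below follows the schema.org FAQPage structure (`mainEntity`, `Question`, `acceptedAnswer`); the sample question wording is hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage JSON-LD from (question, answer) pairs shown on the page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ entry; the markup should mirror text that is visible on the page.
FAQ = faq_jsonld([
    ("How often should I run this checklist?",
     "Run the full checklist quarterly, or immediately after any major site migration."),
])
print(json.dumps(FAQ, indent=2))
```

Generating the markup from the same data source as the visible FAQ keeps the two from drifting apart when answers are edited.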

Time check: This segment takes approximately 2-3 minutes. Content restructuring is the most time-intensive fix but can be prioritized by page importance.

Minutes 13-15: Trust and Performance Signals

Even technically accessible, well-structured content can fail to earn AI citations if it lacks the trust signals that AI systems use to evaluate source credibility. These checks take two to three minutes and surface issues that are often invisible in standard SEO audits.

Check 13: Run PageSpeed Insights on your key pages

Navigate to pagespeed.web.dev and test your most important pages. The AI-specific performance thresholds that correlate with citation rates, according to SALT.agency data, are:

| Metric | Target Threshold | Impact on AI Visibility |
| --- | --- | --- |
| Largest Contentful Paint (LCP) | 2.5 seconds or under | 1.47x more likely to appear in AI outputs |
| Cumulative Layout Shift (CLS) | 0.1 or under | 29.8% higher inclusion rate in generative summaries |
| Total Page Load Time | Under 3 seconds | Recommended ceiling for AI bot accessibility |
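If you record PageSpeed results for several pages, the thresholds above can be applied in bulk. This is a small sketch; the metric keys and the sample measurements are hypothetical, and the values must be copied from your own PageSpeed Insights reports.

```python
# (limit, inclusive): LCP and CLS pass at "or under"; load time must be strictly under.
THRESHOLDS = {
    "lcp_seconds": (2.5, True),
    "cls": (0.1, True),
    "load_seconds": (3.0, False),
}


def passes(metric: str, value: float) -> bool:
    """True if a measured value meets the article's target for that metric."""
    limit, inclusive = THRESHOLDS[metric]
    return value <= limit if inclusive else value < limit


def failing_metrics(measured: dict) -> list[str]:
    """Return the names of the metrics that miss their target."""
    return [name for name in THRESHOLDS if name in measured and not passes(name, measured[name])]


# Hypothetical measurements for one page.
PAGE = {"lcp_seconds": 3.1, "cls": 0.05, "load_seconds": 2.4}
print(failing_metrics(PAGE))
```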

Check 14: Verify author and expertise signals

AI systems evaluate E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals as part of their source selection process. Check that your key pages include: a named author with a linked bio page, the author’s credentials or relevant experience stated explicitly, a clear publication date and last-updated date, and citations or links to primary sources for factual claims.

Check 15: Confirm HTTPS and mobile responsiveness

Navigate to your site using http:// (without the S) and verify it redirects to https://. Then confirm your pages display correctly on mobile using device emulation in Chrome DevTools or a Lighthouse audit; Google retired its standalone Mobile-Friendly Test in December 2023. Pages with broken mobile layouts or content hidden on smaller screens risk being penalized in AI retrieval, particularly by crawlers that identify as mobile user agents.

Time check: This segment takes approximately 2-3 minutes. Performance and trust fixes range from immediate (adding author bios) to developer-dependent (Core Web Vitals improvements).

Your AIO Readiness Score

After completing all 15 checks, tally your results using the scoring guide below.

| Checks Passed | AIO Readiness Level | Recommended Action |
| --- | --- | --- |
| 13-15 | Strong | Monitor AI citations monthly; focus on content freshness and topical depth |
| 10-12 | Moderate | Prioritize schema markup and content restructuring within 30 days |
| 7-9 | Weak | Address rendering architecture and crawler access issues immediately |
| 0-6 | Critical | Conduct a full technical SEO audit before any content investment |
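If you track audits for several sites in a spreadsheet or script, the scoring guide reduces to a simple lookup. The function name is illustrative; the bands are taken directly from the table.

```python
def readiness_level(checks_passed: int) -> str:
    """Map the number of passed checks (0-15) to the AIO readiness level."""
    if not 0 <= checks_passed <= 15:
        raise ValueError("checks_passed must be between 0 and 15")
    if checks_passed >= 13:
        return "Strong"
    if checks_passed >= 10:
        return "Moderate"
    if checks_passed >= 7:
        return "Weak"
    return "Critical"


for score in (15, 11, 8, 4):
    print(score, readiness_level(score))
```

The score is a summary only: a failure in any of the first six checks still warrants attention even when the total lands in a higher band.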

The 30-Day Fix Priority Order

Not all fixes are equal in effort or impact. If your audit reveals multiple failures, address them in this sequence:

Week 1: Unblock access

Fix robots.txt blocking rules, resolve noindex errors, and submit your sitemap to Google Search Console. These fixes cost nothing and can show impact within 4-8 weeks per Recomaze AI’s timeline data.

Week 2: Fix rendering

If your site uses client-side JavaScript rendering, work with your development team to implement pre-rendering or SSR for your highest-value pages. This is the highest-impact technical fix for AI visibility.

Week 2-3: Implement schema

Add Organization, Article, and FAQPage schema to your key pages using JSON-LD. Validate with the Rich Results Test after each implementation.

Week 3-4: Restructure content

Apply the BLUF principle to your top 10 pages by traffic. Move the direct answer to the opening paragraph, restructure headings for extractability, and add FAQ sections where appropriate.

Month 2: Build trust signals

Add or improve author bio pages, update publication dates, add citations to primary sources, and begin a digital PR program to earn third-party brand mentions. According to Omniscient Digital’s study of 23,000+ AI citations, earned media accounts for 48% of all LLM citations, while owned content accounts for only 23%.

What This Checklist Does Not Cover

This 15-minute audit covers the technical and structural foundations of AIO readiness. It does not cover topical authority depth (the breadth of your content coverage on a given subject), entity disambiguation (whether AI systems correctly identify your brand in knowledge graphs), or competitive citation analysis (which competitors are being cited instead of you for your target queries). Those require a full GEO audit, which is a separate, more intensive process.

The 15-minute checklist is a triage tool. It tells you whether your site has the minimum technical prerequisites for AI citation consideration. Passing all 15 checks does not guarantee citation; failing any of the first six checks almost certainly prevents it.

Frequently Asked Questions (FAQs)

Run the full checklist quarterly, or immediately after any major site migration, CMS update, or CDN configuration change. Crawler access and rendering issues can be introduced silently by platform updates.

Passing all 15 checks does not guarantee that your content will be cited. This checklist removes technical barriers to citation eligibility. Whether your content is actually cited depends on content quality, topical authority, E-E-A-T signals, and competitive factors that this checklist does not evaluate.

Focus on information gain – the degree to which your content adds something not available in competing sources. AI systems are trained to prefer content that provides unique data, original analysis, or perspectives not found elsewhere. Review your top target queries and ask honestly whether your content says anything that the top 5 ranking pages do not already say.

The llms.txt standard (proposed by Jeremy Howard) is a markdown file placed at your site’s root that guides AI systems to your most important content. OpenAI’s crawler accounts for over 94% of llms.txt crawling activity on sites that have implemented it. However, SE Ranking’s study of 300,000 domains found no measurable correlation between llms.txt presence and citation rates. It is a low-effort addition worth implementing, but it should not replace the technical fixes in this checklist.